The Jellyfin server uses a modified version of FFmpeg as its transcoder, namely jellyfin-ffmpeg. It enables the Jellyfin server to access the fixed-function video codecs, video processors and GPGPU computing interfaces provided by the vendor of the installed GPU and the operating system.

The supported and validated video hardware acceleration (HWA) methods include:

- Intel/AMD Video Acceleration API (VA-API, Linux only)
- Raspberry Pi Video4Linux2 (V4L2, Linux only)

The transcoding pipeline usually has multiple stages, one of which is an optional video scaling and format conversion step.

As of Jellyfin 10.8, hardware acceleration on Raspberry Pi via OpenMAX (OMX) was dropped and is no longer available. This decision was made because Raspberry Pi is currently migrating to V4L2-based hardware acceleration, which is already available in Jellyfin but does not support all the features that other hardware acceleration methods provide, due to lacking support in FFmpeg. Jellyfin will fall back to software decoding and encoding for those use cases. The current state of hardware acceleration support in FFmpeg can be checked on the rpi-ffmpeg repository.

Hardware acceleration options can be found in the Admin Dashboard under the Transcoding section of the Playback tab. Select a valid hardware acceleration method from the drop-down menu, and a device if applicable. Supported codecs need to be indicated by checking the boxes under the "Enable hardware decoding for" and "Hardware encoding options" settings.
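The software fallback mentioned above can be pictured with a small Python sketch. This is not Jellyfin's actual code: `TranscodeSettings`, `plan_transcode`, and the codec and method strings are hypothetical names chosen for illustration. The sketch only models the idea that a codec uses the selected hardware method when its box is checked, and software otherwise.

```python
from dataclasses import dataclass, field
from typing import Optional, Set, Tuple


@dataclass
class TranscodeSettings:
    """Hypothetical stand-in for the options on the Transcoding page."""
    hwa_method: Optional[str] = None  # e.g. "vaapi" or "v4l2"; None means software only
    hw_decode_codecs: Set[str] = field(default_factory=set)  # boxes checked for decoding
    hw_encode_codecs: Set[str] = field(default_factory=set)  # boxes checked for encoding


def plan_transcode(settings: TranscodeSettings,
                   source_codec: str,
                   target_codec: str) -> Tuple[str, str]:
    """Return (decoder, encoder), each either the HWA method or "software"."""
    def choose(codec: str, enabled: Set[str]) -> str:
        # Use hardware only if a method is selected AND the codec box is checked.
        if settings.hwa_method and codec in enabled:
            return settings.hwa_method
        return "software"

    return (choose(source_codec, settings.hw_decode_codecs),
            choose(target_codec, settings.hw_encode_codecs))


if __name__ == "__main__":
    settings = TranscodeSettings(
        hwa_method="vaapi",
        hw_decode_codecs={"h264", "hevc"},
        hw_encode_codecs={"h264"},
    )
    print(plan_transcode(settings, "hevc", "h264"))
    print(plan_transcode(settings, "av1", "hevc"))
```

In a real server the decision also depends on what the driver and FFmpeg build report, so this is only a mental model of the checkbox logic.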

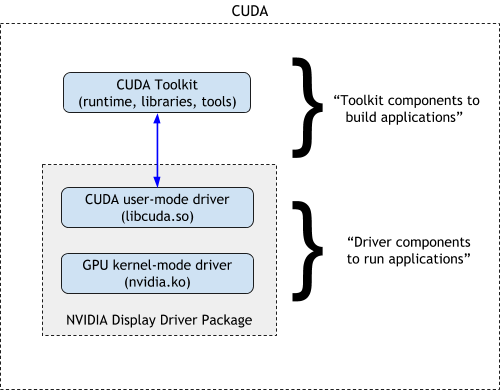

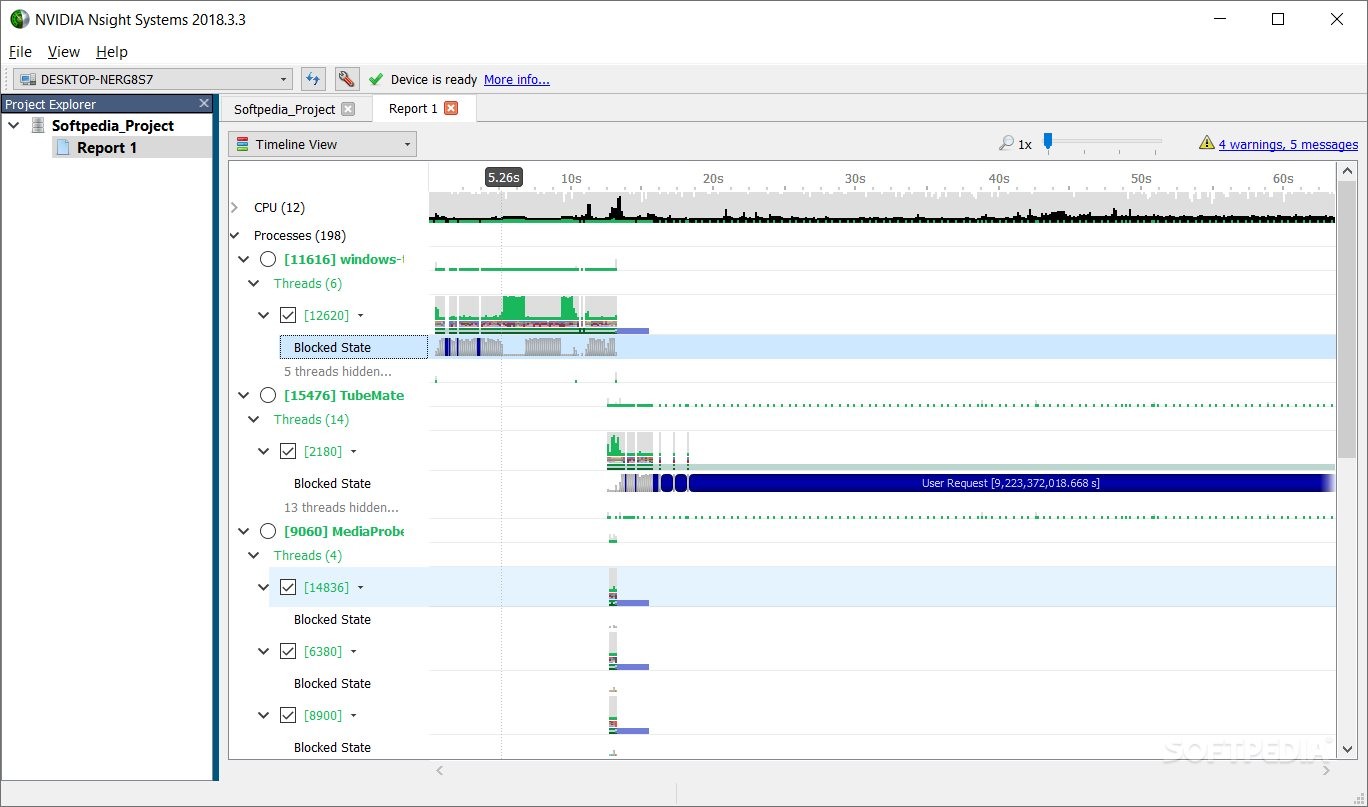

The Jellyfin server can offload on-the-fly video transcoding to an integrated or discrete graphics card (GPU), which accelerates this workload very efficiently without straining your CPU.

MathWorks products used with GPU Coder:

- Simulink® (required for generating code from Simulink models).
- Deep Learning Toolbox™ (required for deep learning).
- … Coder (required for generating code from Simulink models).
- GPU Coder Interface for Deep Learning support package (required for deep learning).
- … Jetson™ and NVIDIA DRIVE® Platforms (required for deployment to embedded targets such as NVIDIA Jetson and Drive).

For instructions on installing MathWorks® products, see the MATLAB installation documentation for your platform. To check which other MathWorks products are installed, enter ver in the MATLAB Command Window. To install the support packages, use Add-On Explorer in MATLAB. If MATLAB is installed on a path that contains non 7-bit ASCII characters, such as Japanese characters, GPU Coder does not work because it cannot locate the code generation library.

The nvcc compiler relies on tight integration with the host development environment, including the host compiler and runtime libraries. It is recommended that you follow the CUDA Toolkit documentation for detailed information on compiler, libraries, and other platform-specific requirements; see the CUDA Toolkit Documentation (NVIDIA). It is recommended to select the default installation options. The nvcc compiler supports multiple versions of GCC, and therefore you can generate CUDA code with other versions of GCC. However, issues may arise when executing the generated code from MATLAB, as the C/C++ run-time libraries that are included with the MATLAB installation are compiled for only the supported version of GCC.

Some functionality depends on profiling tools from NVIDIA. From CUDA Toolkit v10.1 onwards, NVIDIA restricts access to performance counters to only admin users. To enable GPU performance counters to be used by all users, see the instructions provided in Permission issue with Performance Counters (NVIDIA). GPU Coder does not support generating CUDA code by using CUDA Toolkit version 8.

The ARM Compute Library must be installed on the ARM target hardware. Do not use a prebuilt library because it might be incompatible with the compiler on the ARM hardware. Instead, build the library from the source code, on either your host machine or directly on the target hardware. See the instructions for building the library on GitHub®; further information on building the library for CPUs is also available. When building the Compute Library, enable OpenCL support in the build options. See the ARM Compute Library documentation for version requirements. After the build is complete, rename the build folder. Additionally, copy the OpenCL libraries present in the build/opencl-1.2-stubs folder into the Lib folder.
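Two of the GPU Coder constraints noted above (the 7-bit ASCII install path and the CUDA Toolkit version limits) lend themselves to a quick pre-flight check. The following Python sketch is an illustration only, not a MathWorks or NVIDIA tool: the function names are hypothetical, and it assumes the version text contains a fragment of the form `release 10.1`, as `nvcc --version` typically prints.

```python
import re
from typing import List, Optional, Tuple


def is_7bit_ascii(path: str) -> bool:
    """True if every character in the path is 7-bit ASCII."""
    return path.isascii()  # str.isascii() is available from Python 3.7


def parse_cuda_release(nvcc_output: str) -> Optional[Tuple[int, int]]:
    """Extract (major, minor) from text such as 'release 10.1' (assumed format)."""
    match = re.search(r"release (\d+)\.(\d+)", nvcc_output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def cuda_notes(version: Tuple[int, int]) -> List[str]:
    """Collect the caveats from the text that apply to a CUDA Toolkit version."""
    notes = []
    if version[0] == 8:
        notes.append("CUDA Toolkit version 8 is not supported for CUDA code generation")
    if version >= (10, 1):
        notes.append("performance counters are restricted to admin users by default")
    return notes


if __name__ == "__main__":
    # Example inputs; the path and version string are made up for illustration.
    print(is_7bit_ascii("C:/Program Files/MATLAB"))
    print(is_7bit_ascii("C:/プログラム/MATLAB"))
    release = parse_cuda_release("Cuda compilation tools, release 10.1, V10.1.243")
    if release is not None:
        for note in cuda_notes(release):
            print(note)
```

The version text is passed in as a string rather than read from a subprocess, so the sketch can be run on a machine without the CUDA Toolkit installed.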
